From Coarse to Fine-Grained Open-Set Recognition

Abstract

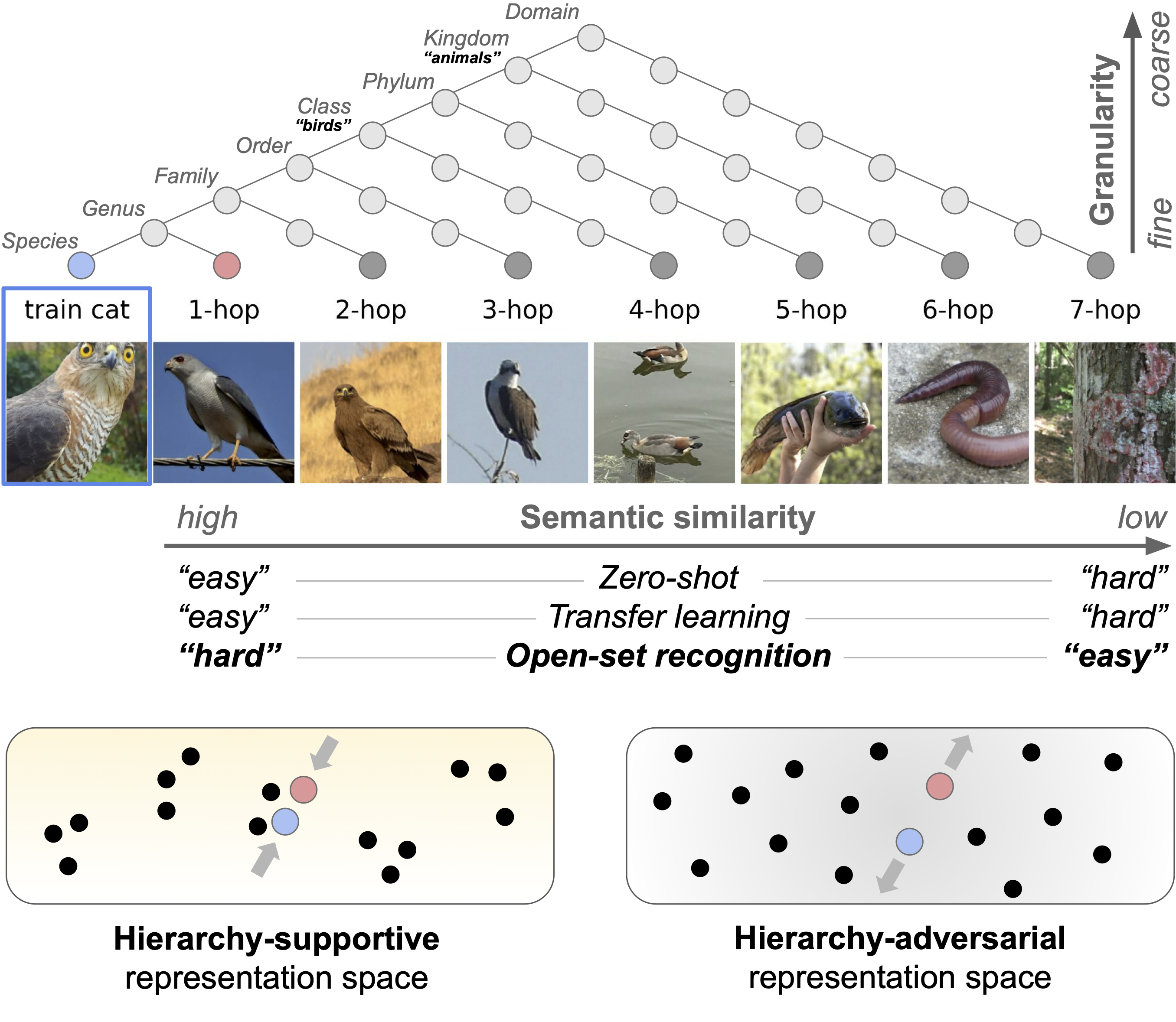

Open-set recognition (OSR) methods aim to identify whether or not a test example belongs to a category observed during training. Depending on how visually similar a test example is to the training categories, the OSR task can be easy or extremely challenging. However, the vast majority of previous work has studied OSR in the presence of large, coarse-grained semantic shifts. In contrast, many real-world problems are inherently fine-grained, which means that test examples may be highly visually similar to the training categories. Motivated by this observation, we investigate three aspects of OSR: label granularity, similarity between the open- and closed-sets, and the role of hierarchical supervision during training. To study these dimensions, we curate new open-set splits of a large fine-grained visual categorization dataset. Our analysis results in several interesting findings, including: (i) the best OSR method to use is heavily dependent on the degree of semantic shift present, and (ii) hierarchical representation learning can improve coarse-grained OSR, but has little effect on fine-grained OSR performance. To further enhance fine-grained OSR performance, we propose a hierarchy-adversarial learning method to discourage hierarchical structure in the representation space, which results in a perhaps counter-intuitive behaviour, and a relative improvement in fine-grained OSR of up to 2% in AUROC and 7% in AUPR over standard training.

Methodology

We make the following contributions:

- We demonstrate that the choice of the best scoring rule depends on the semantic similarity between the closed- and open-set and that familiarity scores perform best in fine-grained OSR.

- We explore the impact of supervision granularity and show that fine-grained supervision improves OSR performance even for large semantic shifts.

- We explore the role of hierarchical representations and introduce hierarchy-adversarial learning to improve fine-grained OSR by discouraging hierarchical structure.

- We introduce iNat2021-OSR, a benchmark with curated open-set splits for the iNat2021 dataset for two taxa: birds and insects. This enables the study of OSR along seven discrete hops that encode the semantic distance from coarse-grained (7-hop) to fine-grained (1-hop).
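The hop-based notion of semantic distance in the last contribution can be made concrete with a small sketch. It assumes that a hop counts how many taxonomic levels one has to climb from the species level until two lineages agree, using the seven ranks from kingdom to species. The exact definition used for iNat2021-OSR is not spelled out here, so the function names `hop_distance` and `open_set_hops` and the example lineages are illustrative assumptions, not the benchmark's code.

```python
# Assumed rank order, from coarsest to finest (seven levels).
RANKS = ("kingdom", "phylum", "class", "order", "family", "genus", "species")


def hop_distance(lineage_a, lineage_b):
    """Levels to climb from the species rank until both lineages agree.

    Returns 0 for identical species, 1 for different species of the same
    genus, and 7 when not even the kingdom is shared (assumed convention).
    """
    if len(lineage_a) != len(RANKS) or len(lineage_b) != len(RANKS):
        raise ValueError("each lineage needs one entry per rank")
    shared = 0
    for a, b in zip(lineage_a, lineage_b):
        if a != b:
            break
        shared += 1
    return len(RANKS) - shared


def open_set_hops(test_lineage, train_lineages):
    """Distance of a test class to its closest training class."""
    return min(hop_distance(test_lineage, t) for t in train_lineages)


# Illustrative lineages (hypothetical closed set with a single bird species).
blue_tit = ("Animalia", "Chordata", "Aves", "Passeriformes",
            "Paridae", "Cyanistes", "Cyanistes caeruleus")
african_blue_tit = blue_tit[:6] + ("Cyanistes teneriffae",)
great_tit = blue_tit[:5] + ("Parus", "Parus major")
honey_bee = ("Animalia", "Arthropoda", "Insecta", "Hymenoptera",
             "Apidae", "Apis", "Apis mellifera")
english_oak = ("Plantae", "Tracheophyta", "Magnoliopsida", "Fagales",
               "Fagaceae", "Quercus", "Quercus robur")

train = [blue_tit]
hops = {
    "african_blue_tit": open_set_hops(african_blue_tit, train),
    "great_tit": open_set_hops(great_tit, train),
    "honey_bee": open_set_hops(honey_bee, train),
    "english_oak": open_set_hops(english_oak, train),
}
```

Under this convention, a congeneric species is a 1-hop (fine-grained) open-set example, while an organism from a different kingdom is a 7-hop (coarse-grained) one.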

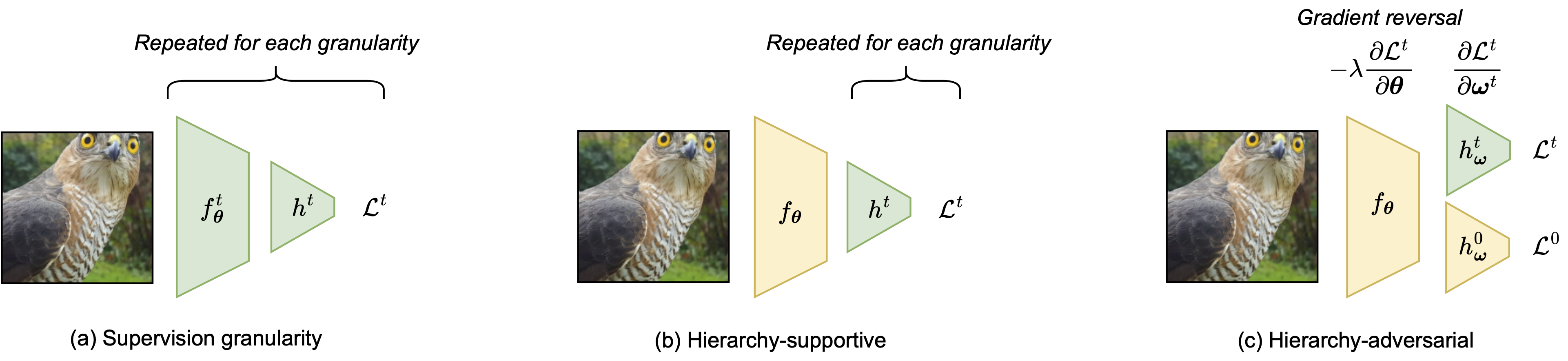

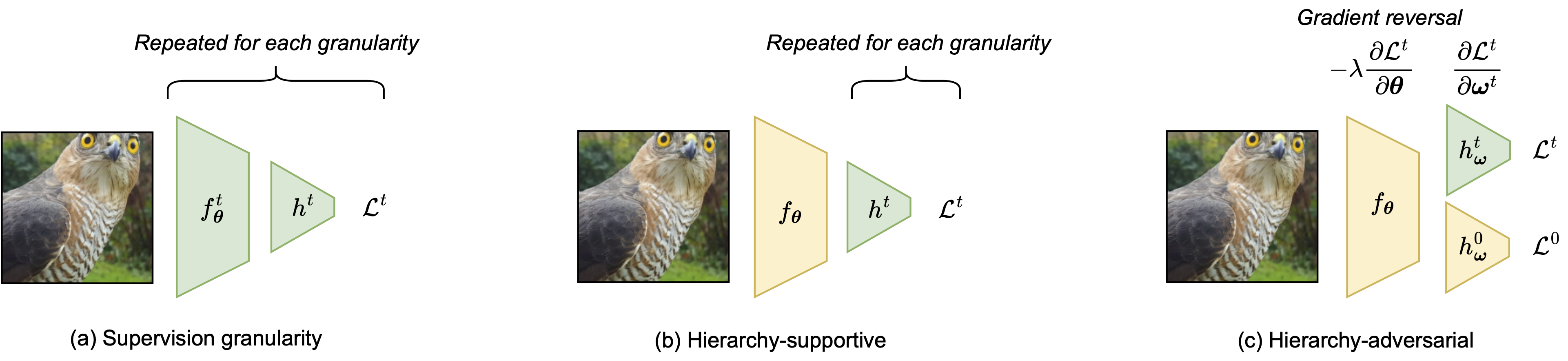
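To illustrate why the choice of scoring rule matters, the following sketch compares two scores that are commonly used to measure familiarity in the OSR literature: the maximum softmax probability and the maximum logit. Whether these are exactly the familiarity scores evaluated in the paper is an assumption, and the logits are made-up toy values. AUROC is computed directly from its pairwise definition, treating closed-set examples as the positive class.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def max_softmax_score(logits):
    """Higher means the example looks more like a training category."""
    return max(softmax(logits))


def max_logit_score(logits):
    """Same idea, but without the normalisation applied by softmax."""
    return max(logits)


def auroc(closed_scores, open_scores):
    """Fraction of (closed, open) pairs ranked correctly; ties count as 0.5."""
    wins = 0.0
    for c in closed_scores:
        for o in open_scores:
            if c > o:
                wins += 1.0
            elif c == o:
                wins += 0.5
    return wins / (len(closed_scores) * len(open_scores))


# Toy logits for a 3-class closed set (hypothetical values).
closed_logits = [[4.0, 0.5, 0.1], [3.0, 1.0, 0.2]]
open_logits = [[1.2, 1.0, 0.9], [3.5, 3.4, 0.1]]

auroc_max_logit = auroc(
    [max_logit_score(l) for l in closed_logits],
    [max_logit_score(l) for l in open_logits],
)
auroc_max_softmax = auroc(
    [max_softmax_score(l) for l in closed_logits],
    [max_softmax_score(l) for l in open_logits],
)
```

In this toy case the second open-set example activates two classes strongly, so the maximum logit ranks it above one closed-set example, while the maximum softmax probability does not. The two rules therefore yield different AUROC values on the same model outputs.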

Training strategies. We train and compare three learning strategies: (a) separate models for each granularity (supervision granularity), (b) single models that predict all levels of granularity (hierarchy-supportive), and (c) single models that discourage the ability to predict all but the finest granularity (hierarchy-adversarial). To discourage hierarchical structure in the representations, we use a gradient reversal layer, a technique from unsupervised domain adaptation, which reverses the gradients coming from the classification heads of the coarser granularities before they reach the shared representation.
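The mechanics of a gradient reversal layer can be shown with a minimal, framework-free sketch. It is a toy under stated assumptions, not the training code of the paper: the shared representation is a single scalar `z = w * x`, both heads are scalar linear maps, and squared error stands in for the classification losses. All names (`GradientReversal`, `toy_gradients`, `lam`) are hypothetical. In a deep learning framework, the same behaviour would typically be implemented as a custom autograd operation.

```python
class GradientReversal:
    """Identity in the forward pass, gradient scaled by -lam in the backward pass."""

    def __init__(self, lam=1.0):
        self.lam = lam

    def forward(self, z):
        return z

    def backward(self, grad_out):
        return -self.lam * grad_out


def toy_gradients(x, w, a, b, y_fine, y_coarse, lam):
    """Hand-derived gradients for one shared feature and two heads.

    Fine head:   loss_f = (a * z - y_fine) ** 2
    Coarse head: loss_c = (b * grl(z) - y_coarse) ** 2
    """
    grl = GradientReversal(lam)
    z = w * x                 # shared representation
    h = grl.forward(z)        # the coarse head sees z unchanged

    fine_res = a * z - y_fine
    coarse_res = b * h - y_coarse

    # The head parameters receive ordinary gradients, so the coarse head
    # still tries to predict the coarse label as well as it can.
    grad_a = 2.0 * fine_res * z
    grad_b = 2.0 * coarse_res * h

    # The shared feature receives the fine gradient plus the *reversed*
    # coarse gradient, pushing it away from coarse-level predictability.
    grad_z = 2.0 * fine_res * a + grl.backward(2.0 * coarse_res * b)
    grad_w = grad_z * x
    return {"grad_w": grad_w, "grad_a": grad_a, "grad_b": grad_b}


adversarial = toy_gradients(x=2.0, w=0.5, a=1.0, b=1.0,
                            y_fine=0.0, y_coarse=0.0, lam=0.5)
# lam = -1.0 cancels the reversal, which mimics ordinary joint training
# of both heads (the hierarchy-supportive setting in this toy).
supportive = toy_gradients(x=2.0, w=0.5, a=1.0, b=1.0,
                           y_fine=0.0, y_coarse=0.0, lam=-1.0)
```

The coarse head's own gradient (`grad_b`) is identical in both settings. Only the signal that reaches the shared weight `w` changes sign and scale, which is the property the hierarchy-adversarial strategy relies on.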

Citation

Nico Lang, Vésteinn Snæbjarnarson, Elijah Cole, Oisin Mac Aodha, Christian Igel, and Serge Belongie. (2024) From Coarse to Fine-Grained Open-Set Recognition. Computer Vision and Pattern Recognition (CVPR), accepted

@inproceedings{lang2024osr,

title={From Coarse to Fine-Grained Open-Set Recognition},

author={Lang, Nico and Snæbjarnarson, Vésteinn and Cole, Elijah and Mac Aodha, Oisin and Igel, Christian and Belongie, Serge},

booktitle={Proceedings of the IEEE/CVF conference on computer vision and pattern recognition},

year={2024}

}